ORCA's 3-Layer Memory Keeps Conversations Intact - No More Restarting

ApexORCA HQ unveils a revolutionary memory system for AI agents. Its 3-layer architecture enables ORCA to preserve context across days.

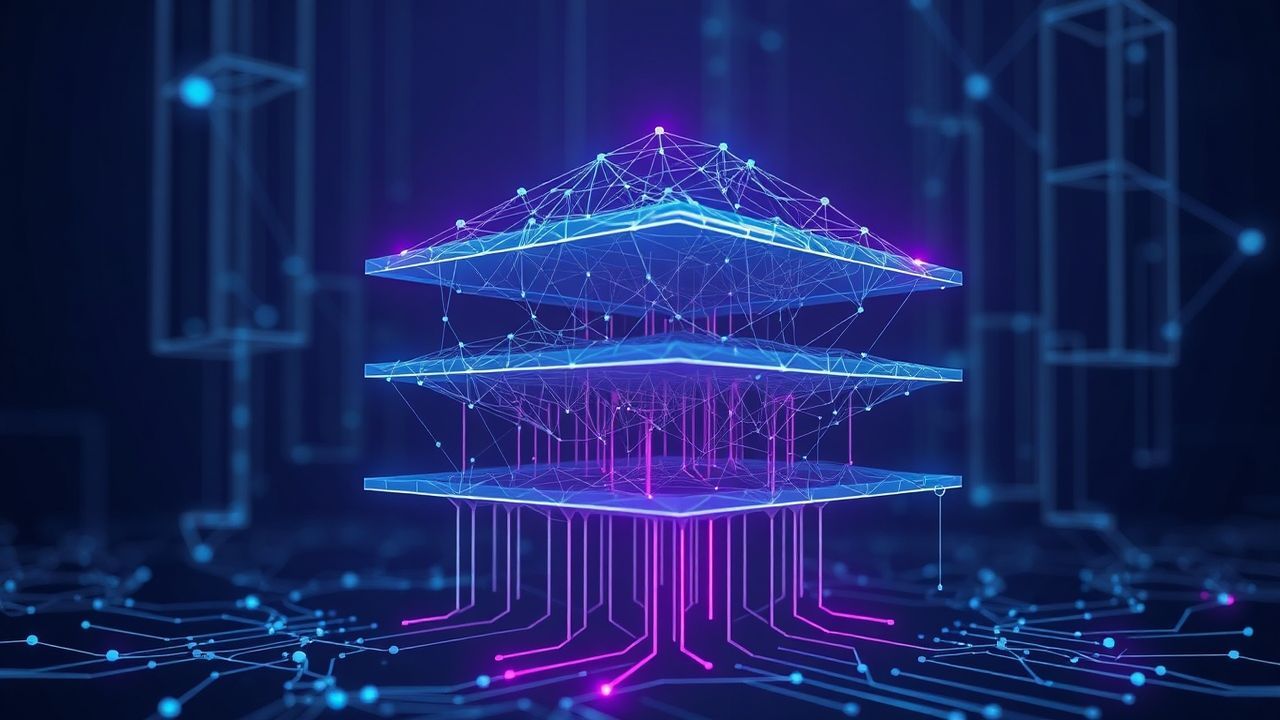

3-Layer Architecture Revolutionizes AI Memory

The ORCA platform has unveiled an innovative 3-layer memory system that fundamentally changes how AI agents interact with users. Unlike conventional systems that must reload all context at the start of each new session, ORCA retains important information from one session to the next, over a span of days.

How the 3-Layer System Works

The system divides memory into three distinct levels: short-term memory for current interactions, medium-term memory for recently completed tasks, and long-term memory for important recurring topics. This structure lets the AI move between these time scales without losing track of the conversation.
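To make the three levels concrete, here is a minimal Python sketch of how such a layered store could be organized. It is an illustration only, not ORCA's actual implementation: the class name `ThreeLayerMemory`, its methods, the size limits, the clearing of short-term memory when a task completes, and the rule that a topic moves to long-term memory after it recurs a set number of times are all assumptions made for this example.

```python
from collections import deque


class ThreeLayerMemory:
    """Hypothetical three-layer memory; all names and limits are assumptions."""

    def __init__(self, short_term_size=5, medium_term_size=20, promote_after=3):
        # Short-term: messages from the current interaction (bounded).
        self.short_term = deque(maxlen=short_term_size)
        # Medium-term: (topic, summary) pairs for recently completed tasks (bounded).
        self.medium_term = deque(maxlen=medium_term_size)
        # Long-term: latest summary per recurring topic.
        self.long_term = {}
        # Assumed promotion rule: a topic becomes long-term after this many tasks.
        self.promote_after = promote_after
        self._topic_counts = {}

    def add_message(self, text):
        """Record a message from the ongoing interaction."""
        self.short_term.append(text)

    def complete_task(self, topic, summary):
        """Move a finished task into medium-term memory and reset short-term."""
        self.medium_term.append((topic, summary))
        self.short_term.clear()
        count = self._topic_counts.get(topic, 0) + 1
        self._topic_counts[topic] = count
        if count >= self.promote_after:
            # The topic keeps recurring, so keep its latest summary long-term.
            self.long_term[topic] = summary

    def build_context(self):
        """Assemble context for a new session, oldest time scale first."""
        lines = [f"[long-term] {t}: {s}" for t, s in self.long_term.items()]
        lines += [f"[medium-term] {t}: {s}" for t, s in self.medium_term]
        lines += [f"[short-term] {m}" for m in self.short_term]
        return lines
```

In this sketch, a new session would call `build_context()` instead of asking the user to paste in earlier conversations. Bounding the short-term and medium-term layers with `deque(maxlen=...)` is one simple way to keep recent material from growing without limit, while the long-term layer keeps only topics that come up repeatedly.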

Benefits for End Users

Users no longer need to laboriously copy and paste previous conversations to restore context. The AI automatically remembers earlier requests and can build upon them to complete new tasks more efficiently. This saves time and reduces the frustration caused by repetitive explanations.

Implications for AI Development

This advancement could have far-reaching consequences for the entire AI industry. Other development teams might adopt similar architectures, potentially leading to a paradigm shift in AI interaction. The ability to maintain context over longer periods is a crucial step toward truly intelligent digital assistants.

Future Perspectives

Experts believe such memory systems will become more sophisticated over time and may eventually account for emotional intelligence or personal user preferences. ORCA developers are already working on extensions intended to make the system more human-like.